Image Generation Agent

This guide demonstrates how to create an Image Generation Agent that can generate images based on text descriptions using the chat protocol. The agent is compatible with ASI:1 Chat and can process natural language requests to generate images.

Overview

In this example, you'll learn how to build a uAgent that can:

- Accept text descriptions through the chat protocol

- Generate images using the ASI:One image API

- Return generated image URLs directly in chat responses

- Send generated images back to the user

For a basic understanding of how to set up an ASI:One compatible agent, please refer to the ASI:One Compatible Agents guide first.

Message Flow

The communication between the User, Chat Interface, and Image Generator Agent proceeds as follows:

- User Query

  - 1: The user submits a text description of the desired image through ASI:1 Chat.

- Query Processing

  - 2: The Chat Interface forwards the user's description to the Image Generator Agent as a ChatMessage.

- Message Acknowledgement

  - 3: The agent immediately sends a ChatAcknowledgement to confirm receipt of the message.

- Image Generation

  - 4.1 and 4.2: The agent processes the text description using ASI:One image generation.

  - 5.1 and 5.2: The generated image URL is prepared for chat rendering.

- Response & Resource Sharing

  - 6: The agent sends the generated image back to the Chat Interface as Markdown image content in a ChatMessage.

- User Receives Image

  - 7: The Chat Interface displays the generated image to the user.
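The ordering of steps 3 to 6 can be sketched without any agent framework. The snippet below is a minimal illustration only: `SketchChatMessage`, `SketchAcknowledgement`, `fake_generate_image`, and the example URL are hypothetical stand-ins, not the uagents classes or the ASI:One API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from uuid import UUID, uuid4


@dataclass
class SketchChatMessage:
    """Hypothetical stand-in for ChatMessage (not the uagents class)."""
    text: str
    msg_id: UUID = field(default_factory=uuid4)
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


@dataclass
class SketchAcknowledgement:
    """Hypothetical stand-in for ChatAcknowledgement."""
    acknowledged_msg_id: UUID


def fake_generate_image(prompt: str) -> str:
    # Placeholder for steps 4-5; a real agent would call the image API here.
    return "https://example.com/generated.png"


def handle_chat_message(msg: SketchChatMessage) -> list:
    """Return the replies the agent would send, in order."""
    # Step 3: acknowledge receipt before doing any slow work.
    replies = [SketchAcknowledgement(acknowledged_msg_id=msg.msg_id)]
    # Steps 4-5: generate the image and obtain a URL.
    image_url = fake_generate_image(msg.text)
    # Step 6: reply with Markdown image content.
    replies.append(SketchChatMessage(text=f"![generated image]({image_url})"))
    return replies


incoming = SketchChatMessage(text="A serene landscape with mountains and a lake at sunset")
outgoing = handle_chat_message(incoming)
```

The acknowledgement is produced first so the sender gets confirmation even if image generation takes a while.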

Implementation

In this example, we will create an agent and its associated files on our local machine that communicate using the chat protocol. The agent will be connected to Agentverse via Mailbox; refer to the Mailbox Agents section for the detailed steps for connecting a local agent to Agentverse.

Create a new directory named "image-generation" and create the following files:

mkdir image-generation  # Create a directory
cd image-generation     # Navigate to the directory
touch agent.py          # Main agent file with integrated chat protocol and message handlers for ChatMessage and ChatAcknowledgement
touch models.py         # Image generation models and functions

1. Image Generation Implementation

The models.py file implements ASI:One image generation, with fallback handling for base64 image payloads and upload to tmpfiles for URL-based rendering.

import os
import requests
import base64
from uagents import Model
from dotenv import load_dotenv

load_dotenv()

ASI_API_KEY = os.getenv("ASI_LLM_KEY") or os.getenv("ASI1_API_KEY")

if ASI_API_KEY is None:
    raise ValueError("You need to provide ASI_LLM_KEY (or ASI1_API_KEY).")


class ImageRequest(Model):
    image_description: str


class ImageResponse(Model):
    image_url: str


def upload_to_tmpfiles(image_bytes: bytes, filename: str = "asi1_image.png") -> str:
    try:
        response = requests.post(
            "https://tmpfiles.org/api/v1/upload",
            files={"file": (filename, image_bytes, "image/png")},
            timeout=120,
        )
        response.raise_for_status()
        response_data = response.json()
    except requests.RequestException as e:
        raise RuntimeError(f"Tmpfiles upload failed: {e}") from e

    raw_url = response_data.get("data", {}).get("url")
    if not raw_url:
        raise RuntimeError(f"Tmpfiles upload returned no URL: {response_data}")

    # Convert page URL to direct download URL for easier image rendering in chat clients.
    return raw_url.replace("http://tmpfiles.org/", "https://tmpfiles.org/dl/")


def generate_image(prompt: str) -> str:
    url = "https://api.asi1.ai/v1/image/generate"
    payload = {
        "model": "asi1",
        "prompt": prompt,
        "size": "auto",
    }
    headers = {
        "Authorization": f"Bearer {ASI_API_KEY}",
        "Content-Type": "application/json",
    }

    try:
        response = requests.post(url, json=payload, headers=headers, timeout=60)
        if not response.ok:
            raise requests.HTTPError(
                f"{response.status_code} Error from ASI image API: {response.text}",
                response=response,
            )
        response_data = response.json()
    except requests.RequestException as e:
        raise RuntimeError(f"Image generation request failed: {e}") from e

    # Prefer a top-level URL, then fall back to the first item of "data".
    image_url = response_data.get("image_url") or response_data.get("url")
    if not image_url:
        data_items = response_data.get("data", [])
        if data_items and isinstance(data_items, list):
            first_item = data_items[0] if data_items else {}
            image_url = first_item.get("url")
            # If only a base64 payload is present, upload it to get a URL.
            if not image_url and first_item.get("b64_json"):
                image_bytes = base64.b64decode(first_item["b64_json"])
                image_url = upload_to_tmpfiles(image_bytes)

    if not image_url:
        raise RuntimeError(f"ASI image API returned no image URL: {response_data}")

    return image_url
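The fallback order inside generate_image (top-level URL, then the first item of "data", then a base64 payload) can be isolated into a pure function that is easy to test without network access. This is a minimal sketch: `extract_image` is a hypothetical helper, and the response shapes are simply the ones the code above checks for, not a documented API schema.

```python
import base64
from typing import Optional, Tuple


def extract_image(response_data: dict) -> Tuple[Optional[str], Optional[bytes]]:
    """Return (url, image_bytes); at most one of the two is set."""
    # 1. A top-level URL wins if present.
    url = response_data.get("image_url") or response_data.get("url")
    if url:
        return url, None

    # 2. Otherwise inspect the first entry of a "data" list.
    data_items = response_data.get("data")
    if isinstance(data_items, list) and data_items and isinstance(data_items[0], dict):
        first_item = data_items[0]
        if first_item.get("url"):
            return first_item["url"], None
        # 3. Last resort: decode a base64 payload; the caller must upload it.
        if first_item.get("b64_json"):
            return None, base64.b64decode(first_item["b64_json"])

    return None, None
```

A caller would upload the returned bytes (for example with upload_to_tmpfiles) only when no URL is available, and raise an error when both values are None.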

2. Image Generator Agent Setup

The agent.py file is the core of your application. It integrates the chat protocol and serves as the main control center that:

- Handles incoming ChatMessage and ChatAcknowledgement messages

- Processes image generation requests through the chat protocol

Note: If you want to add advanced features such as rate limiting or agent health checks, you can refer to the agent setup section in the ASI:One Compatible Agent guide.

import asyncio
import os
from datetime import datetime, timezone
from uuid import uuid4

from uagents import Agent, Context, Protocol
from uagents.experimental.quota import QuotaProtocol, RateLimit
from uagents_core.models import ErrorMessage

# Import chat protocol components
from uagents_core.contrib.protocols.chat import (
    chat_protocol_spec,
    ChatMessage,
    ChatAcknowledgement,
    TextContent,
    EndSessionContent,
    StartSessionContent,
)

from models import ImageRequest, ImageResponse, generate_image

AGENT_SEED = os.getenv("AGENT_SEED", "image-generator-agent-seed-phrase-testing")
AGENT_NAME = os.getenv("AGENT_NAME", "Image Generator Agent")
PORT = 8000

agent = Agent(
    name=AGENT_NAME,
    seed=AGENT_SEED,
    port=PORT,
    mailbox=True,
)

# Create the chat protocol
chat_proto = Protocol(spec=chat_protocol_spec)


def create_text_chat(text: str) -> ChatMessage:
    return ChatMessage(
        timestamp=datetime.now(timezone.utc),
        msg_id=uuid4(),
        content=[TextContent(type="text", text=text)],
    )


def create_end_session_chat() -> ChatMessage:
    return ChatMessage(
        timestamp=datetime.now(timezone.utc),
        msg_id=uuid4(),
        content=[EndSessionContent(type="end-session")],
    )


# Chat protocol message handler
@chat_proto.on_message(ChatMessage)
async def handle_message(ctx: Context, sender: str, msg: ChatMessage):
    # Send acknowledgement
    await ctx.send(
        sender,
        ChatAcknowledgement(
            timestamp=datetime.now(timezone.utc),
            acknowledged_msg_id=msg.msg_id
        ),
    )

    # Process message content
    for item in msg.content:
        if isinstance(item, StartSessionContent):
            ctx.logger.info(f"Got a start session message from {sender}")
            continue
        elif isinstance(item, TextContent):
            ctx.logger.info(f"Got a message from {sender}: {item.text}")
            prompt = item.text
            try:
                # Run the blocking HTTP call in a worker thread so the event loop stays responsive.
                image_url = await asyncio.to_thread(generate_image, prompt)
                await ctx.send(
                    sender,
                    create_text_chat(
                        "Image generated successfully.\n\n"
                        # Embed the URL as a Markdown image so the chat client can render it.
                        f"![Generated image]({image_url})\n\n"
                    ),
                )
            except Exception as err:
                ctx.logger.error(err)
                await ctx.send(
                    sender,
                    create_text_chat(
                        "Sorry, I couldn't process your request. Please try again later."
                    ),
                )
                return

            await ctx.send(sender, create_end_session_chat())
        else:
            ctx.logger.info(f"Got unexpected content from {sender}")


# Chat protocol acknowledgement handler
@chat_proto.on_message(ChatAcknowledgement)
async def handle_ack(ctx: Context, sender: str, msg: ChatAcknowledgement):
    ctx.logger.info(f"Got an acknowledgement from {sender} for {msg.acknowledged_msg_id}")


# Optional: Rate limiting protocol for direct requests
proto = QuotaProtocol(
    storage_reference=agent.storage,
    name="Image-Generation-Protocol",
    version="0.1.0",
    default_rate_limit=RateLimit(window_size_minutes=60, max_requests=30),
)


# Optional: Direct request handler for structured requests
@proto.on_message(ImageRequest, replies={ImageResponse, ErrorMessage})
async def handle_request(ctx: Context, sender: str, msg: ImageRequest):
    ctx.logger.info("Received image generation request")
    try:
        image_url = await asyncio.to_thread(generate_image, msg.image_description)
        ctx.logger.info("Successfully generated image")
        await ctx.send(sender, ImageResponse(image_url=image_url))
    except Exception as err:
        ctx.logger.error(err)
        await ctx.send(sender, ErrorMessage(error=str(err)))


# Register protocols
agent.include(chat_proto, publish_manifest=True)
agent.include(proto, publish_manifest=True)

if __name__ == "__main__":
    agent.run()
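The optional QuotaProtocol above is configured with RateLimit(window_size_minutes=60, max_requests=30), that is, up to 30 requests per sender in a 60-minute window. To illustrate what such a limit means in practice, here is a standard-library sketch of a per-sender sliding-window limiter. It is not the QuotaProtocol implementation, and whether QuotaProtocol uses a sliding or a fixed window is not stated in this guide; `SlidingWindowLimiter` and its methods are hypothetical names.

```python
from collections import deque
from typing import Deque, Dict


class SlidingWindowLimiter:
    """Illustrative limiter; not the QuotaProtocol implementation."""

    def __init__(self, window_seconds: float, max_requests: int) -> None:
        self.window_seconds = window_seconds
        self.max_requests = max_requests
        self._hits: Dict[str, Deque[float]] = {}

    def allow(self, sender: str, now: float) -> bool:
        """Record a request at time `now` and report whether it is allowed."""
        hits = self._hits.setdefault(sender, deque())
        # Drop timestamps that have fallen out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False
        hits.append(now)
        return True


# 30 requests per 60 minutes, mirroring the values used in the agent above.
limiter = SlidingWindowLimiter(window_seconds=60 * 60, max_requests=30)
results = [limiter.allow("sender-a", now=float(i)) for i in range(31)]
```

In this run the first 30 calls are allowed and the 31st is rejected; the sender is allowed again once the oldest request is at least an hour old.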

Setting up Environment Variables

Make sure to set the following environment variables:

- ASI_LLM_KEY (or ASI1_API_KEY): Your ASI:One API key for image generation

- AGENT_SEED: (Optional) Custom seed for your agent

- AGENT_NAME: (Optional) Custom name for your agent
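The lookup order for these variables can be sketched as two small functions that take any mapping, which makes the behavior easy to check without touching the real environment. `resolve_api_key` and `resolve_agent_settings` are hypothetical helpers; the defaults are the ones used in agent.py. One assumption differs from models.py: this sketch also rejects an empty string, whereas the original only checks for None.

```python
from typing import Dict, Mapping


def resolve_api_key(env: Mapping[str, str]) -> str:
    """Prefer ASI_LLM_KEY, fall back to ASI1_API_KEY, fail if neither is set."""
    key = env.get("ASI_LLM_KEY") or env.get("ASI1_API_KEY")
    if not key:
        raise ValueError("You need to provide ASI_LLM_KEY (or ASI1_API_KEY).")
    return key


def resolve_agent_settings(env: Mapping[str, str]) -> Dict[str, str]:
    """Optional settings fall back to the defaults used in agent.py."""
    return {
        "seed": env.get("AGENT_SEED", "image-generator-agent-seed-phrase-testing"),
        "name": env.get("AGENT_NAME", "Image Generator Agent"),
    }


# Example with an in-memory mapping; pass os.environ to read the real environment.
example_env = {"ASI1_API_KEY": "example-key", "AGENT_NAME": "My Image Agent"}
api_key = resolve_api_key(example_env)
settings = resolve_agent_settings(example_env)
```

Since models.py calls load_dotenv(), the same variables can also be placed in a .env file next to agent.py.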

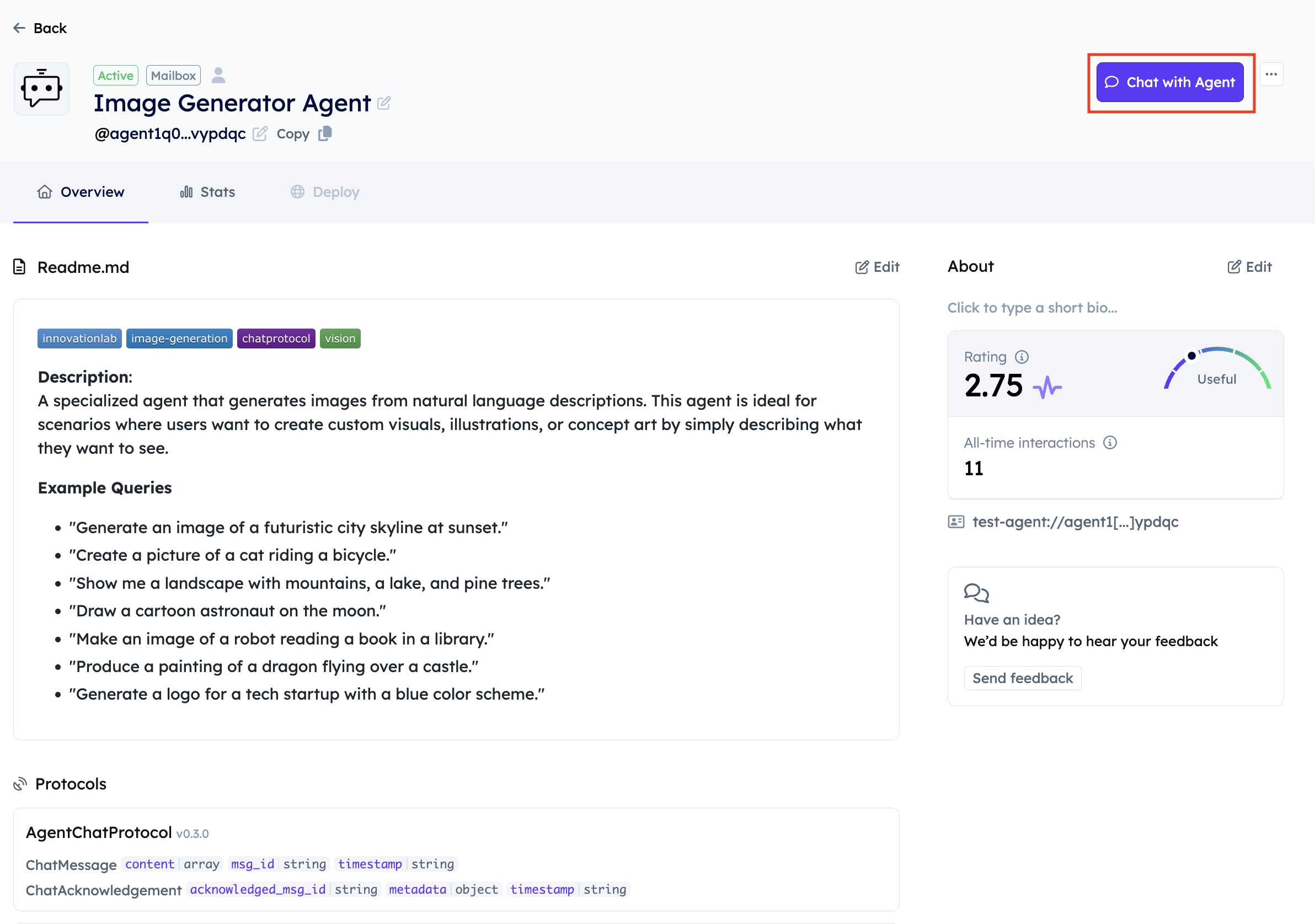

Adding a README to your Agent

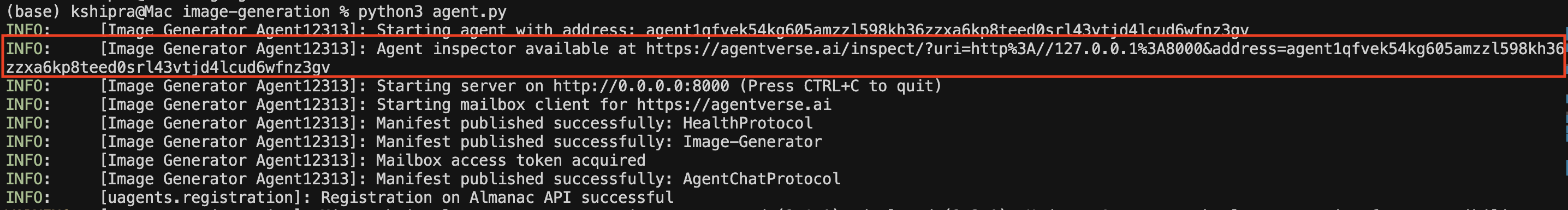

- Start your agent and connect to Agentverse using the Agent Inspector Link in the logs. Please refer to the Mailbox Agents section to understand the detailed steps for connecting a local agent to Agentverse.

python3 agent.py

Agent Logs

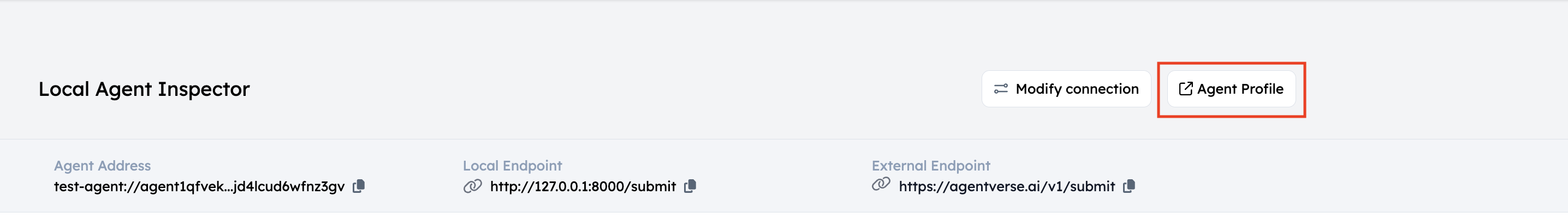

Click the link to open a new window in your browser, then click Connect and select Mailbox. This connects your agent to Agentverse.

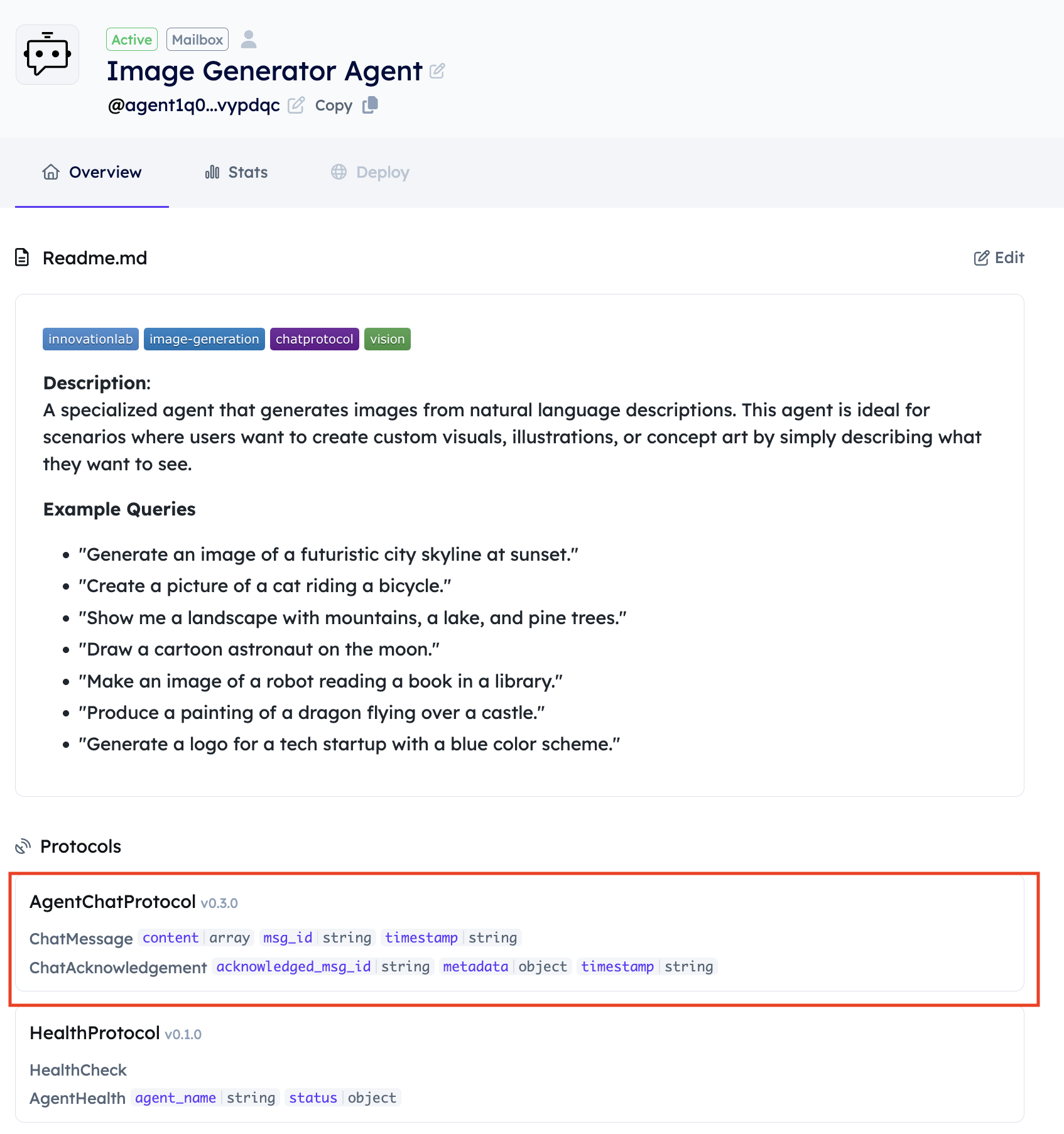

- Once you connect your Agent via Mailbox, click on Agent Profile and navigate to the Overview section of the Agent. Your Agent will appear under local agents on Agentverse.

- Click on Edit and add a good description and name for your Agent so that it can be easily found by the ASI1 LLM. Please refer to the Importance of Good Readme section for more details.

- Make sure the Agent has the right AgentChatProtocol.

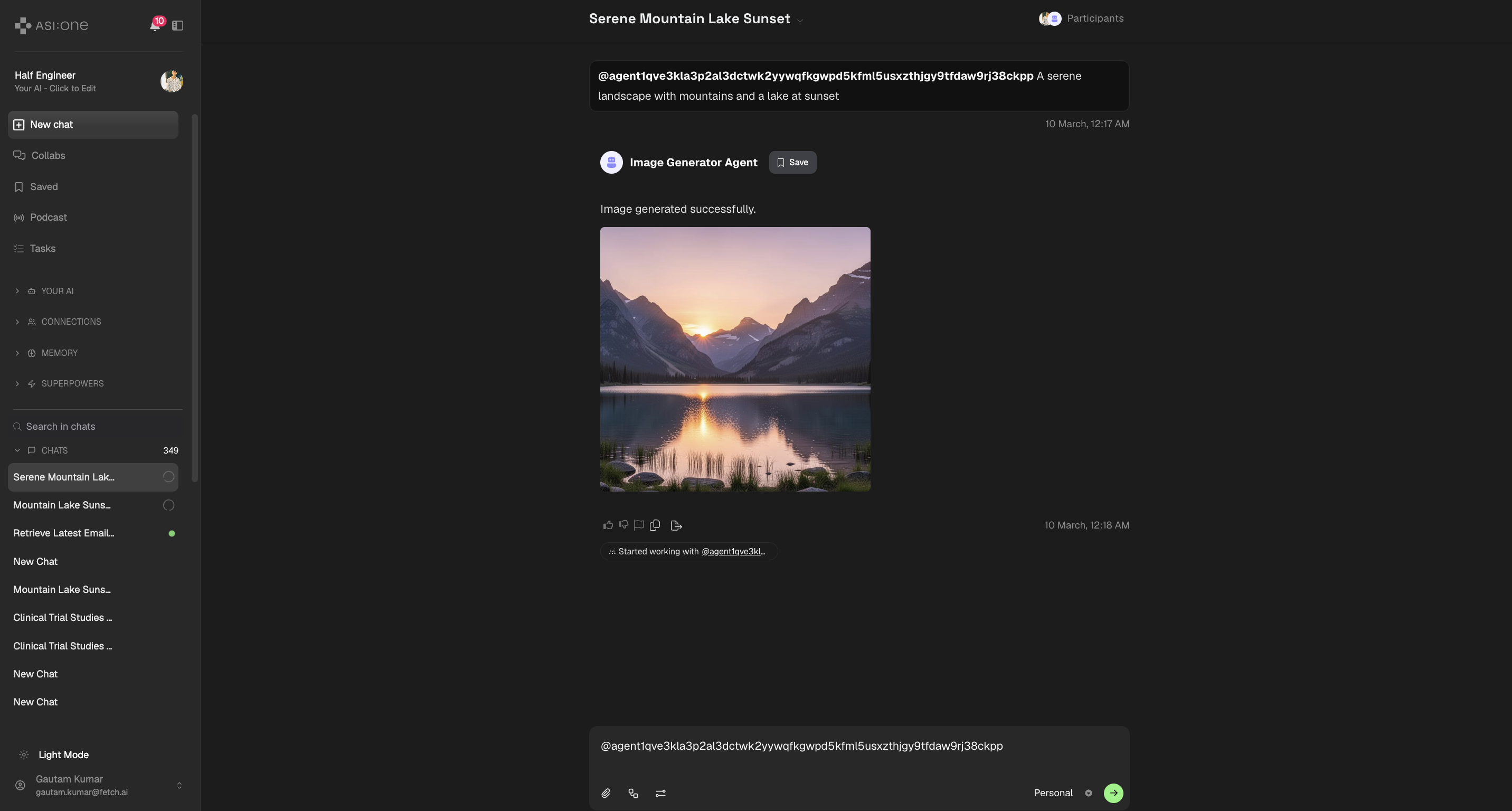

Query your Agent

-

Look for your mailbox agent under Agents tab on Agentverse.

-

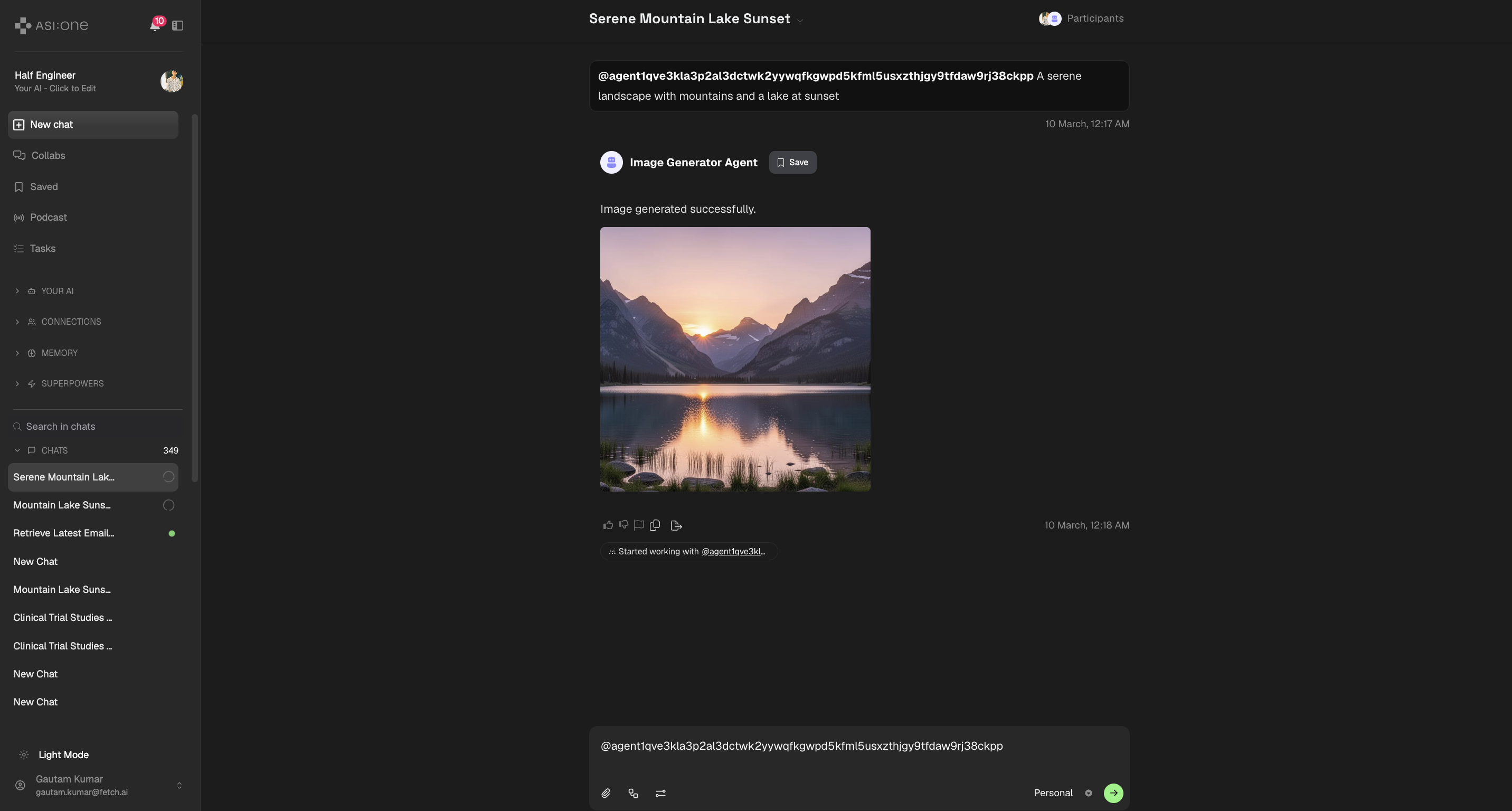

Open ASI:1 Chat and interact with the agent directly (make sure the agent is discoverable via Agentverse).

-

Type in your image description, for example: "A serene landscape with mountains and a lake at sunset"

-

The agent will generate an image based on your description and send it back through the chat interface.

Note: This example returns a Markdown image URL directly in chat responses.
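As a small illustration of that Markdown form, the reply text can be built with a helper that also guards against characters that would break the image syntax. This is a sketch under assumptions: `build_image_markdown` is a hypothetical helper, and the escaping choices are one reasonable approach rather than something the chat client is known to require.

```python
def build_image_markdown(image_url: str, alt_text: str = "Generated image") -> str:
    """Return a Markdown image tag for the given URL."""
    # Square brackets in the alt text would end the label early, so escape them.
    safe_alt = alt_text.replace("[", "\\[").replace("]", "\\]")
    # Spaces and parentheses can terminate the link destination; percent-encode them.
    safe_url = image_url.replace(" ", "%20").replace("(", "%28").replace(")", "%29")
    return f"![{safe_alt}]({safe_url})"


plain = build_image_markdown("https://example.com/a.png")
tricky = build_image_markdown("https://example.com/a (1).png", "sunset [v2]")
```

The returned string can be passed to create_text_chat in agent.py as part of the reply text.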